TI-RTOS Overview¶

TI-RTOS is the operating environment for TI 15.4-Stack projects on CC13x0 devices. The TI-RTOS kernel is a tailored version of the legacy SYS/BIOS kernel and operates as a real-time, preemptive, multi-threaded operating system with drivers and tools for synchronization and scheduling.

Threading Modules¶

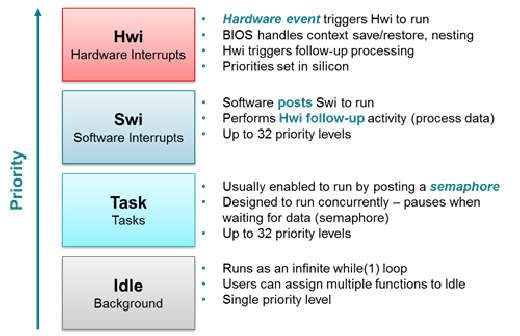

The TI-RTOS kernel manages four distinct context levels of thread execution, as shown in Figure 16. The thread modules are described below in descending order of priority.

Figure 16. TI-RTOS Execution Threads

This section describes these four execution threads and various structures used throughout TI-RTOS for messaging and synchronization. In most cases, the underlying TI-RTOS functions have been abstracted to higher-level functions in util.c. The lower-level TI-RTOS functions are described in the TI-RTOS Kernel API Guide (see the TI-RTOS Kernel User Guide). This document also defines the packages and modules included with TI-RTOS.

Hardware Interrupts (Hwi)¶

Hwi threads (also called Interrupt Service Routines or ISRs) are the threads with the highest priority in a TI-RTOS application. Hwi threads are used to perform time-critical tasks that are subject to hard deadlines. They are triggered in response to external asynchronous events (interrupts) that occur in the real-time environment. Hwi threads always run to completion but can be preempted temporarily by Hwi threads triggered by other interrupts, if enabled. Specific information on the nesting, vectoring, and functionality of interrupts can be found in the TI CC13x0 Technical Reference Manual.

Generally, interrupt service routines are kept short so as not to affect the hard real-time system requirements. Also, because Hwis must run to completion, no blocking APIs may be called from within this context.

TI-RTOS drivers that require interrupts will initialize the required interrupts for the assigned peripheral. See Drivers for more information.

Note

Debugging provides an example of using GPIOs and Hwis. While the SDK includes a peripheral driver library to abstract hardware register access, it is suggested to use the thread-safe TI-RTOS drivers as described in Drivers.

The Hwi module for the CC13x0 also supports zero-latency interrupts. These interrupts do not go through the TI-RTOS Hwi dispatcher and are therefore more responsive than standard interrupts. However, the interrupt service routine of a zero-latency interrupt must not invoke any TI-RTOS kernel APIs directly, and it is up to the ISR to preserve its own context to prevent it from interfering with the kernel's scheduler.

Software Interrupts (Swi)¶

Patterned after hardware interrupts (Hwi), software interrupt threads provide additional priority levels between Hwi threads and Task threads. Unlike Hwis, which are triggered by hardware interrupts, Swis are triggered programmatically by calling certain Swi module APIs. Swis handle threads subject to time constraints that preclude them from being run as tasks, but whose deadlines are not as severe as those of hardware ISRs. Like Hwis, Swi threads always run to completion. Swis allow Hwis to defer less critical processing to a lower-priority thread, minimizing the time the CPU spends inside an interrupt service routine, where other Hwis can be disabled. Swis require only enough space to save the context for each Swi interrupt priority level, while Tasks use a separate stack for each thread.

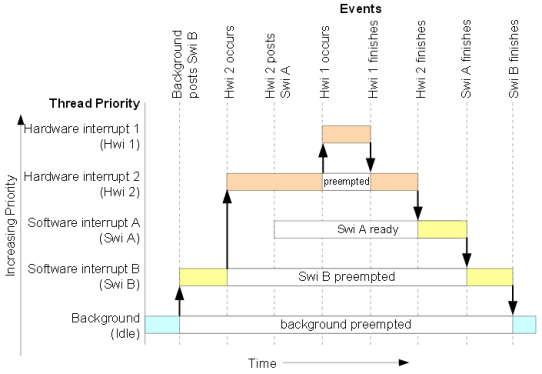

As with Hwis, Swis should be kept short and may not include any blocking API calls. This allows high priority tasks such as the wireless protocol stack to execute as needed. It is suggested to _post() some TI-RTOS synchronization primitive to allow for further post-processing from within a Task context. Figure 17 illustrates such a use case.

Figure 17. Preemption Scenario

The commonly used Clock module operates from within a Swi context. It is important that functions called by a Clock object do not invoke blocking APIs and are short in execution.

Task¶

Task threads have higher priority than the background (Idle) thread and lower priority than software interrupts. Tasks differ from software interrupts in that they can wait (block) during execution until necessary resources are available. Tasks require a separate stack for each thread. TI-RTOS provides a number of mechanisms that can be used for inter-task communication and synchronization. These include Semaphores, Events, Message queues, and Mailboxes.

See Tasks for more details.

Idle Task¶

Idle threads execute at the lowest priority in a TI-RTOS application and are executed one after another in a continuous loop (the Idle Loop). After main returns, a TI-RTOS application calls the startup routine for each TI-RTOS module and then falls into the Idle Loop. Each thread must wait for all others to finish executing before it is called again. The Idle Loop runs continuously except when it is preempted by higher-priority threads. Only functions that do not have hard deadlines should be executed in the Idle Loop.

For CC13x0 devices, the idle task allows the Power Policy Manager to enter the lowest-power state allowed.

Kernel Configuration¶

A TI-RTOS application configures the TI-RTOS kernel using a configuration file (.cfg file) that is found within the project. In CCS projects, this file is found in the application project workspace under the TOOLS folder.

The configuration is accomplished by selectively including or using RTSC modules available to the kernel. To use a module, the .cfg file calls xdc.useModule(), after which it can set various options as defined in the TI-RTOS Kernel User Guide.

Some of the options that can be configured in the .cfg file include, but are not limited to:

- Boot options

- Number of Hwi, Swi, and Task priorities

- Exception and Error handling

- The duration of a System tick (the most fundamental unit of time in the TI-RTOS kernel).

- Defining the application’s entry point and interrupt vector

- TI-RTOS heaps and stacks (not to be confused with other heap managers!)

- Including pre-compiled kernel and TI-RTOS driver libraries

- System providers (for System_printf())

Whenever the .cfg file is changed, XDCTools' configuro tool must be rerun. This step is already handled for you as a pre-build step in the provided CCS examples.

Note

The name of the .cfg file doesn't really matter. A project should, however, include only one .cfg file.

For CC26xx and CC13xx devices, a TI-RTOS kernel exists in ROM. Typically for flash footprint savings, the .cfg will include the kernel's ROM module as shown in Listing 2.

    /* ================ ROM configuration ================ */
    /*
     * To use BIOS in flash, comment out the code block below.
     */
    if (typeof NO_ROM == 'undefined' || (typeof NO_ROM != 'undefined' && NO_ROM == 0))
    {
        var ROM = xdc.useModule('ti.sysbios.rom.ROM');
        if (Program.cpu.deviceName.match(/CC26/)) {
            ROM.romName = ROM.CC2640R2F;
        }
        else if (Program.cpu.deviceName.match(/CC13/)) {
            ROM.romName = ROM.CC1350;
        }
    }

The TI-RTOS kernel in ROM is optimized for performance. If additional instrumentation is required in your application (typically for debugging), you must include the TI-RTOS kernel in flash, which will increase flash memory consumption. The following is a short list of requirements for using the TI-RTOS kernel in ROM.

- BIOS.assertsEnabled must be set to false

- BIOS.logsEnabled must be set to false

- BIOS.taskEnabled must be set to true

- BIOS.swiEnabled must be set to true

- BIOS.runtimeCreatesEnabled must be set to true

- BIOS must use the ti.sysbios.gates.GateMutex module

- Clock.tickSource must be set to Clock.TickSource_TIMER

- Semaphore.supportsPriority must be false

- Swi, Task, and Hwi hooks are not permitted

- Swi, Task, and Hwi name instances are not permitted

- Task stack checking is disabled

- Hwi.disablePriority must be set to 0x20

- Hwi.dispatcherAutoNestingSupport must be set to true

- The default Heap instance must be set to the ti.sysbios.heaps.HeapMem manager

For additional documentation on the requirements listed above, see the TI-RTOS Kernel User Guide.

Creating vs. Constructing¶

Most TI-RTOS modules have _create() and _construct() APIs to initialize primitive instances. The main runtime differences between the two APIs are memory allocation and error handling.

Create APIs perform a memory allocation from the default TI-RTOS heap before initialization. As a result, the application must check the return value for a valid handle before continuing.

    Semaphore_Handle sem;
    Semaphore_Params semParams;

    Semaphore_Params_init(&semParams);
    sem = Semaphore_create(0, &semParams, NULL); /* Memory allocated in here */

    if (sem == NULL) /* Check if the handle is valid */
    {
        System_abort("Semaphore could not be created");
    }

Construct APIs are given a data structure in which to store the instance's variables. As the memory has been pre-allocated for the instance, error checking may not be required after constructing.

    Semaphore_Handle sem;
    Semaphore_Params semParams;
    Semaphore_Struct structSem; /* Memory allocated at build time */

    Semaphore_Params_init(&semParams);
    Semaphore_construct(&structSem, 0, &semParams);

    /* It's optional to store the handle */
    sem = Semaphore_handle(&structSem);
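The difference between the two allocation styles can also be illustrated outside TI-RTOS. The following host-side C sketch is only an analogy: the names `Counter`, `Counter_create()`, `Counter_construct()`, and `Counter_delete()` are hypothetical and are not part of any TI-RTOS API. It shows why a create-style call needs a NULL check while a construct-style call does not.

```c
#include <stdlib.h>

/* Hypothetical object type standing in for a kernel object such as a semaphore. */
typedef struct {
    int count;
} Counter;

/* "Construct" style: the caller supplies the storage, so no allocation can fail. */
static void Counter_construct(Counter *obj, int initialCount)
{
    obj->count = initialCount;
}

/* "Create" style: storage comes from the heap, so the result may be NULL. */
static Counter *Counter_create(int initialCount)
{
    Counter *obj = malloc(sizeof(*obj));
    if (obj != NULL) {
        Counter_construct(obj, initialCount);
    }
    return obj;
}

/* Releases an object obtained from Counter_create(). */
static void Counter_delete(Counter *obj)
{
    free(obj);
}
```

In this sketch the constructed object can live in static or stack memory whose size is known at build time, whereas the created object depends on heap availability at run time.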

Thread Synchronization¶

The TI-RTOS kernel provides several modules for synchronizing tasks such as Semaphore, Event, and Queue. The following sections discuss these common TI-RTOS primitives.

Semaphores¶

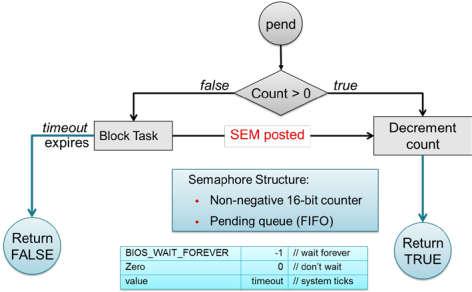

Semaphores are commonly used for task synchronization and mutual exclusion throughout TI-RTOS applications. Figure 18 shows the semaphore functionality. Semaphores can be counting semaphores or binary semaphores. Counting semaphores keep track of the number of times the semaphore is posted with Semaphore_post(). They are useful when a group of resources is shared between tasks: such tasks might call Semaphore_pend() to see if a resource is available before using one. Binary semaphores can have only two states: available (count = 1) and unavailable (count = 0). Binary semaphores can be used to share a single resource between tasks or as a basic signaling mechanism where the semaphore can be posted multiple times. Binary semaphores do not keep track of the count; they track only whether the semaphore has been posted.

Figure 18. Semaphore Functionality
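The counting rules described above can be modeled in a few lines of host-side C. This is a minimal sketch, not the kernel's implementation: `SemModel`, `SemModel_post()`, and `SemModel_tryPend()` are hypothetical names, and the pend here is non-blocking (it returns false where a real task would block).

```c
#include <stdbool.h>

typedef enum { SEM_MODE_COUNTING, SEM_MODE_BINARY } SemMode;

/* Hypothetical model of a semaphore's counter; no tasks or blocking involved. */
typedef struct {
    SemMode  mode;
    unsigned count;
} SemModel;

/* Post: a binary semaphore saturates at 1, a counting semaphore keeps counting. */
static void SemModel_post(SemModel *sem)
{
    if (sem->mode == SEM_MODE_BINARY) {
        sem->count = 1;
    } else {
        sem->count++;
    }
}

/* Non-blocking pend: takes the semaphore if available, otherwise reports failure. */
static bool SemModel_tryPend(SemModel *sem)
{
    if (sem->count == 0) {
        return false;   /* a real task would block here */
    }
    sem->count--;
    return true;
}
```

Posting the binary model twice leaves a single pending signal, while the counting model remembers both posts.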

Initializing a Semaphore¶

A semaphore is initialized in TI-RTOS by either creating or constructing it, as explained in Creating vs. Constructing.

See Listing 3 for how to create a Semaphore.

See Listing 4 for how to construct a Semaphore.

Pending on a Semaphore¶

Semaphore_pend() is a blocking function call and may only be called from within a Task context. A task calling Semaphore_pend() will block if the semaphore's counter is 0; otherwise the call decrements the counter by one and returns. While the task is blocked, lower priority tasks that are ready to run are allowed to execute. The task remains blocked until another thread calls Semaphore_post() or the supplied timeout (in system ticks) expires, whichever comes first. The return value of Semaphore_pend() distinguishes whether the semaphore was posted or the call timed out.

    bool isSuccessful;
    /* 1000 ms expressed in system ticks (Clock_tickPeriod is in microseconds) */
    uint32_t timeoutInTicks = 1000 * (1000 / Clock_tickPeriod);

    /* Pend (approximately) up to 1 second */
    isSuccessful = Semaphore_pend(sem, timeoutInTicks);

    if (isSuccessful)
    {
        System_printf("Semaphore was posted");
    }
    else
    {
        System_printf("Semaphore timed out");
    }

Note

The default TI-RTOS system tick period is 1 millisecond. This default is reconfigured to 10 microseconds for CC26xx and CC13xx devices by setting Clock.tickPeriod = 10 in the .cfg file.

Given a system tick of 10 microseconds, the timeout in Listing 5 is 100000 ticks, or approximately 1 second.
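The tick arithmetic can be checked with a small host-side helper. This is a sketch under stated assumptions: `msToTicks()` is a hypothetical function, not a TI-RTOS API, and the tick period is passed in explicitly in microseconds rather than read from the kernel configuration.

```c
#include <stdint.h>

/*
 * Convert a duration in milliseconds to system ticks, given the tick
 * period in microseconds. Hypothetical helper for illustration only.
 */
static uint32_t msToTicks(uint32_t ms, uint32_t tickPeriodUs)
{
    /* The 64-bit intermediate keeps ms * 1000 from overflowing 32 bits;
     * the final result is still truncated to 32 bits. */
    return (uint32_t)(((uint64_t)ms * 1000u) / tickPeriodUs);
}
```

With a 10 microsecond tick, `msToTicks(1000, 10)` yields 100000 ticks, and with the 1 millisecond default, `msToTicks(1000, 1000)` yields 1000 ticks. Multiplying before dividing also avoids the rounding loss that occurs when the tick period does not divide 1000 evenly.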

Posting a Semaphore¶

Posting a semaphore is accomplished via a call to Semaphore_post(). A task that is pending on a posted semaphore will transition from a blocked state to a ready state. If no higher priority thread is ready to run, it will allow the previously pending task to execute. If no task is pending on the semaphore, a call to Semaphore_post() will increment its counter. Binary semaphores have a maximum count of 1.

    Semaphore_post(sem);

Queues¶

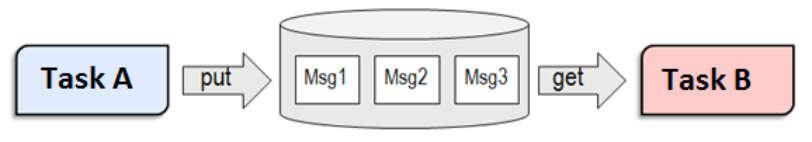

Queues let applications process messages in a first in, first out (FIFO) order. A project may use a queue to manage internal events coming from application profiles or another task. Clocks must be used when an event must be processed in a time-critical manner. Queues are more useful for message passing across various thread contexts.

The Queue module provides a unidirectional method of message passing between threads using a FIFO. Figure 19 shows the queue messaging process: a queue is configured for unidirectional communication from task A to task B. Task A pushes messages into the queue and task B pops messages from the queue in order.

Figure 19. Queue Messaging Process
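The FIFO behavior between task A and task B can be sketched on a host with a simple linked list. The types and functions below (`Msg`, `MsgQueue`, `MsgQueue_put()`, `MsgQueue_get()`) are hypothetical and are not the TI-RTOS Queue module or the util.c wrappers. The sketch is also not thread-safe; it only shows the ordering.

```c
#include <stddef.h>

/* Hypothetical message; the link pointer is embedded in the message itself. */
typedef struct Msg {
    struct Msg *next;
    int         event;
} Msg;

typedef struct {
    Msg *head;   /* oldest message, next to be taken by the consumer */
    Msg *tail;   /* newest message, last one put by the producer */
} MsgQueue;

/* Producer side (task A in the figure): append at the tail. */
static void MsgQueue_put(MsgQueue *q, Msg *m)
{
    m->next = NULL;
    if (q->tail != NULL) {
        q->tail->next = m;
    } else {
        q->head = m;
    }
    q->tail = m;
}

/* Consumer side (task B in the figure): remove from the head, NULL if empty. */
static Msg *MsgQueue_get(MsgQueue *q)
{
    Msg *m = q->head;
    if (m != NULL) {
        q->head = m->next;
        if (q->head == NULL) {
            q->tail = NULL;
        }
    }
    return m;
}
```

Messages come out in the order they were put in, and an empty queue returns NULL so the consumer knows there is nothing left to process.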

The TI-RTOS Queue functions have been abstracted into functions in the util.c file. See the Queue module in the TI-RTOS Kernel User Guide for the underlying functions. These utility functions combine the Queue module with the ability to notify the recipient task of an available message through the TI-RTOS Event module.

In CC13x0 software, the event used for this process is the same event that the given task uses for task synchronization through ICall. Queues are commonly used to limit the processing time of application callbacks in the context of the higher priority threads. In this manner, the higher priority thread queues a message to the application for later processing instead of processing it immediately in its own context.

Tasks¶

TI-RTOS Tasks are equivalent to independent threads that conceptually execute functions in parallel within a single C program. In reality, switching the processor from one task to another helps achieve concurrency. Each task is always in one of the following modes of execution:

- Running: task is currently running

- Ready: task is scheduled for execution

- Blocked: task is suspended from execution

- Terminated: task is terminated from execution

- Inactive: task is on inactive list

One (and only one) task is always running, even if it is only the idle task (see Figure 16). The current running task can be blocked from execution by calling certain task module functions, as well as functions provided by other modules like Semaphores. The current task can also terminate itself. In either case, the processor is switched to the highest priority task that is ready to run. See the Task module in the package ti.sysbios.knl section of the TI-RTOS Kernel User Guide for more information on these functions.

Numeric priorities are assigned to tasks, and multiple tasks can have the same priority. Tasks are readied to execute by highest to lowest priority level; tasks of the same priority are scheduled in order of arrival. The priority of the currently running task is never lower than the priority of any ready task. The running task is preempted and rescheduled to execute when there is a ready task of higher priority.
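The selection rule described above (highest priority first, order of arrival among equals) can be expressed as a small host-side function. This is a sketch, not the kernel's scheduler: `ReadyTask` and `pickNextTask()` are hypothetical, and the sketch assumes that a larger number means a higher priority.

```c
#include <stddef.h>

/* Hypothetical record for a task that is ready to run. */
typedef struct {
    int      priority;     /* larger number = higher priority (assumption) */
    unsigned readyOrder;   /* sequence number taken when the task became ready */
} ReadyTask;

/* Returns the index of the task to run next, or -1 if none is ready. */
static int pickNextTask(const ReadyTask *ready, size_t n)
{
    int best = -1;
    for (size_t i = 0; i < n; i++) {
        if (best < 0 ||
            ready[i].priority > ready[best].priority ||
            (ready[i].priority == ready[best].priority &&
             ready[i].readyOrder < ready[best].readyOrder)) {
            best = (int)i;
        }
    }
    return best;
}
```

Given two ready tasks at the same top priority, the one that became ready earlier is chosen; a lower priority task is chosen only when nothing above it is ready.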

Initializing a Task¶

When a task is initialized, it has its own runtime stack for storing local variables as well as further nesting of function calls. All tasks executing within a single program share a common set of global variables, accessed according to the standard rules of scope for C functions. This set of memory is the context of the task. The following is an example of the application task being constructed.

    #include <stdint.h>
    #include <xdc/std.h>
    #include <ti/sysbios/BIOS.h>
    #include <ti/sysbios/knl/Task.h>

    /* Assumed example values (not taken from the SDK); adjust for the application */
    #define TASK_STACK_SIZE 512
    #define TASK_PRIORITY   1

    /* Task's stack */
    uint8_t sbcTaskStack[TASK_STACK_SIZE];

    /* Task object (to be constructed) */
    Task_Struct task0;

    /* Task function */
    void taskFunction(UArg arg0, UArg arg1)
    {
        /* Local variables. Variables here go onto task stack!! */

        /* Run one-time code when task starts */

        while (1) /* Run loop forever (unless terminated) */
        {
            /*
             * Block on a signal or for a duration. Examples:
             *   Semaphore_pend()
             *   Event_pend()
             *   Task_sleep()
             *
             * "Process data"
             */
        }
    }

    int main()
    {
        Task_Params taskParams;

        /* Configure task */
        Task_Params_init(&taskParams);
        taskParams.stack = sbcTaskStack;
        taskParams.stackSize = TASK_STACK_SIZE;
        taskParams.priority = TASK_PRIORITY;

        Task_construct(&task0, taskFunction, &taskParams, NULL);

        /* Start the scheduler; this call does not return */
        BIOS_start();

        return 0;
    }

The task creation is done in the main() function, before the TI-RTOS kernel's scheduler is started by BIOS_start(). The task executes at its assigned priority level after the scheduler is started.

TI recommends using an existing application task for application-specific processing. When adding an additional task to the application project, the task must be assigned a priority within the TI-RTOS priority-level range defined in the TI-RTOS configuration file (.cfg).

Tip

Reduce the number of Task priority levels in the TI-RTOS configuration file (.cfg) to gain additional RAM savings:

    Task.numPriorities = 6;

Ensure the task has a minimum task stack size of 512 bytes of predefined memory. At a minimum, each stack must be large enough to handle normal subroutine calls and one task preemption context. A task preemption context is the context that is saved when one task preempts another as a result of an interrupt thread readying a higher priority task. Using the TI-RTOS profiling tools of the IDE, the task can be analyzed to determine the peak task stack usage.

Note

The term created describes the instantiation of a task. The actual TI-RTOS method is to construct the task. See Creating vs. Constructing for details on constructing TI-RTOS objects.

A Task Function¶

When a task is initialized, a function pointer to a task function is passed to the Task_construct function. When the task first gets a chance to process, this is the function that TI-RTOS runs. Listing 7 shows the general structure of this task function.

In typical use cases, the task spends most of its time in the blocked state, where it calls a _pend() API such as Semaphore_pend(). Often, high priority threads such as Hwis or Swis unblock the task with a _post() API such as Semaphore_post().

Clocks¶

Clock instances are functions that can be scheduled to run after a certain number of system ticks. Clock instances are either one-shot or periodic. These instances can start immediately upon creation or be configured to start after a delay, and can be stopped at any time. All clock instances are executed when they expire in the context of a Swi. The minimum resolution is the TI-RTOS clock tick period set in the TI-RTOS configuration file (.cfg).

Note

The default TI-RTOS kernel tick period is 1 millisecond. For CC13x0 devices, this is reconfigured in the TI-RTOS configuration file (.cfg):

    Clock.tickPeriod = 10;

Each system tick, which is derived from the real-time clock (RTC), launches a Clock object that compares the running tick count with the period of each clock to determine if the associated function should run. For higher-resolution timers, TI recommends using a 16-bit hardware timer channel or the sensor controller. See the Clock module in the package ti.sysbios.knl section of the TI-RTOS Kernel User Guide for more information on these functions.
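The difference between one-shot and periodic clock instances can be sketched with a host-side countdown model. This is a simplification under stated assumptions: `ClockModel`, `ClockModel_tick()`, and `countExpiry()` are hypothetical names, not the TI-RTOS Clock API, and the model counts down remaining ticks instead of comparing against a running tick count.

```c
#include <stdbool.h>
#include <stdint.h>

typedef void (*ClockFxn)(void *arg);

/* Hypothetical model of a clock instance. */
typedef struct {
    ClockFxn fxn;         /* function to call on expiry */
    void    *arg;         /* argument passed to fxn */
    uint32_t remaining;   /* ticks until the next expiry */
    uint32_t period;      /* 0 = one-shot, otherwise reload value in ticks */
    bool     active;      /* false once stopped or after a one-shot expiry */
} ClockModel;

/* Example expiry function: counts how many times the clock has fired. */
static void countExpiry(void *arg)
{
    (*(int *)arg)++;
}

/* Advance one clock instance by a single system tick. */
static void ClockModel_tick(ClockModel *clk)
{
    if (!clk->active) {
        return;
    }
    if (clk->remaining > 0) {
        clk->remaining--;
    }
    if (clk->remaining == 0) {
        if (clk->period != 0) {
            clk->remaining = clk->period;   /* periodic: reload */
        } else {
            clk->active = false;            /* one-shot: stop */
        }
        clk->fxn(clk->arg);
    }
}
```

Over six ticks, a one-shot instance with a 3-tick timeout fires once and then stays inactive, while a periodic instance with a 2-tick period fires three times. As in the kernel, the expiry function should be short and non-blocking.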

Drivers¶

The TI-RTOS provides a suite of CC13x0 peripheral drivers that can be added to an application. The drivers provide a mechanism for the application to interface with the CC13x0 onboard peripherals and communicate with external devices. These drivers make use of DriverLib to abstract register access.

There is significant documentation relating to each TI-RTOS driver located in the SimpleLink CC13x0 SDK. Refer to the SimpleLink CC13x0 SDK release notes for the specific location. This section only provides an overview of how drivers fit into the software ecosystem. For a description of available features and driver APIs, refer to the TI-RTOS API Reference.

Adding a Driver¶

Some of the drivers are added to the project as source files in their respective folder under the Drivers folder in the project workspace.

The driver source files can be found in their respective folder at $DRIVER_LOC\ti\drivers.

The $DRIVER_LOC argument variable refers to the installation location and can be viewed in CCS under Project Options→ Resources→ Linked Resources, Path Variables tab.

To add a driver to a project, include the header file of the respective driver in the application file (or files) where the driver APIs are referenced.

For example, to add the PIN driver for reading or controlling an output I/O pin, add the following:

#include <ti/drivers/pin/PINCC26XX.h>

Also add the following TI-RTOS driver files to the project under the Drivers\PIN folder:

- PINCC26XX.c

- PINCC26XX.h

- PIN.h

This is described in more detail in the following sections.

Board File¶

The board file sets the parameters of the fixed driver configuration for a specific board configuration, such as configuring the GPIO table for the PIN driver or defining which pins are allocated to the I2C, SPI, or UART driver.

The board files for the CC13x0 LaunchPad are in the following path:

<SDK_INSTALL_DIR>\source\ti\boards\<Board_Type>

TI 15.4-Stack uses board files found here:

C:\ti\simplelink_cc13x0_sdk_1_30_00_xx\examples\CC1350_LAUNCHXL\ti154stack\common\boards

<Board_Type> is the actual device. To view the actual path to the board files, see the following:

- CCS: Project Options→ Resources→ Linked Resources, Path Variables tab

In the path above, the <Board_Type> is selected based on a preprocessor symbol in the application project.

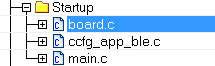

The top-level board file (board.c) then uses this symbol to include the correct board file into the project. This top-level board file can be found at <SDK_INSTALL_DIR>\examples\rtos\CC13x0_LAUNCHXL\ti154stack\common\boards\board.c and is located under the Startup folder in the project workspace.

Board Level Drivers¶

There are also several board driver files which are a layer of abstraction on top of TI-RTOS drivers, to function for a specific board, for example Board_key.c. If desired, these files can be adapted to work for a custom board.

Creating a Custom Board File¶

A custom board file must be created to design a project for a custom hardware board. TI recommends starting with an existing board file and modifying it as needed. The easiest way to add a custom board file to a project is to replace the top-level board file. If flexibility is desired to switch back to an included board file, the linking scheme defined in Adding a Driver should be used.

At minimum, the board file must contain a PIN_Config structure that places all configured and unused pins in a default, safe state and defines the state when the pin is used. This structure is used to initialize the pins in main() as described in Start-Up in main():

    PIN_init(BoardGpioInitTable);

The board schematic layout must match the pin table for the custom board file. Improper pin configurations can lead to run-time exceptions. See the PIN driver documentation for more information on configuring this table.

Available Drivers¶

This section describes each available driver and provides a basic example of adding the driver to the simple_peripheral project. For more detailed information on each driver, see the TI-RTOS API Reference.

PIN¶

The PIN driver allows control of the I/O pins for software-controlled general-purpose I/O (GPIO) or connections to hardware peripherals. As stated in the Board File section, the pins must first be initialized to a safe state (configured in the board file) in main(). After this initialization, any module can use the PIN driver to configure a set of pins for use.

Other Drivers¶

The other drivers included with TI-RTOS are: Crypto (AES), I2C, PDM, Power, UART, SPI, RF, and UDMA. The stack makes use of the Power, RF, and UDMA drivers, so extra care must be taken if using these. As with the other drivers, these are well-documented, and examples are provided in the SimpleLink CC13x0 SDK.